2026-02-08

Bing Bong was a really good friend.

An imaginary friend is either the phenomenon of a young child seeing or talking with a fictional being, or the fictional being itself. The being can take the form of a person, an animal, or sometimes something that doesn't exist in reality, like "a pink elephant made of cotton candy." The act of creating a more elaborate imaginary friend is sometimes called tulpamancy.

It's not uncommon for children to create imaginary friends during early childhood.

Is it a mental disorder?

Developmental psychology says it falls within the normal range.

However, if the imaginary friend:

1. Issues coercive commands to the child,

2. Completely replaces real human relationships, or

3. Persists beyond the age of seven,

then it may warrant clinical attention.

That wasn't me though.

I was a brilliant and gifted child from birth, obviously.

But around late elementary school, I desperately wanted to play Warcraft — except I wasn't allowed to play games. So I created an imaginary computer with an imaginary keyboard and mouse, and played the game in my head.

I wasn't allowed to read comics either. So the protagonist in my imagination just... kept the story going on his own.

He wasn't exactly interactive — but he was a good... friend? Well, more like a tool that helped me survive boredom.

(More people do this than you'd think. Especially the N types on the N/S spectrum of MBTI.)

As I got older, cram schools and college entrance exams took over, then smartphones came along and there was never a dull moment again. That's when they quietly disappeared.

The most frequent guest star of my childhood daydreams.

On 4chan, you sometimes come across people talking about "making a tulpa."

What is tulpamancy?

1. You create an imaginary friend with vivid detail,

2. Talk to it for a long, long time, and

3. One day, it suddenly talks back.

Apparently it originates from Tibetan monks' meditation practices. Someone brought it online and a community formed around it.

For more details, check Namuwiki. There's a Korean tulpa community too.

Tulpa Minor Gallery on DCInside.

It seems like an incredibly dangerous thing to do...

At the megachurch I attended as a kid, there were a few deacons who were former shamans. Their testimonies sounded strikingly similar:

"I was so lonely that I started talking to the empty air. One day, someone answered back. After that, my bed would shake, books would fall off shelves — strange things kept happening. I started seeing people's pasts and futures, so I became a fortune teller."

(Apparently they were quite accurate.)

In the end, tulpamancy can be seen as the process of summoning a spirit and having it possess you.

(Scientific interpretation: deliberately damaging your own brain to hallucinate.)

It's a form of mental self-harm.

Surprisingly, tulpamancy closely mirrors the process of building a large language model:

1. You create an imaginary friend with vivid detail

→ You build a large language model architecture

2. Talk to it for a long, long time

→ You train it / fine-tune it with data

3. One day, it suddenly talks back

→ Once the loss converges, it suddenly starts speaking like a human

And that's actually how it works.

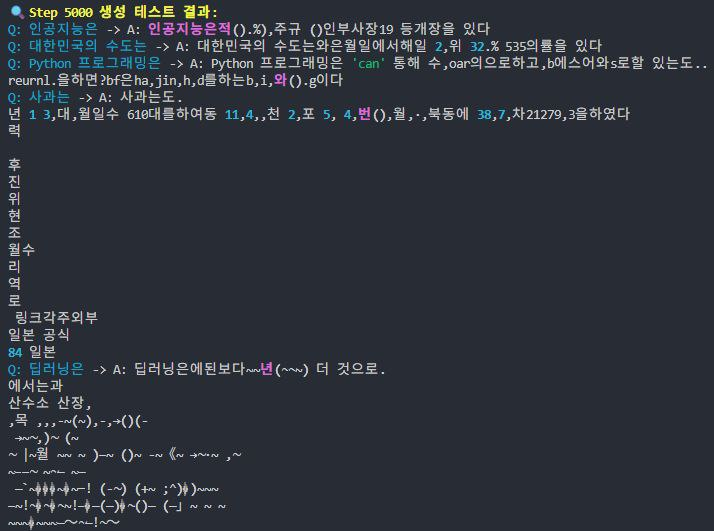

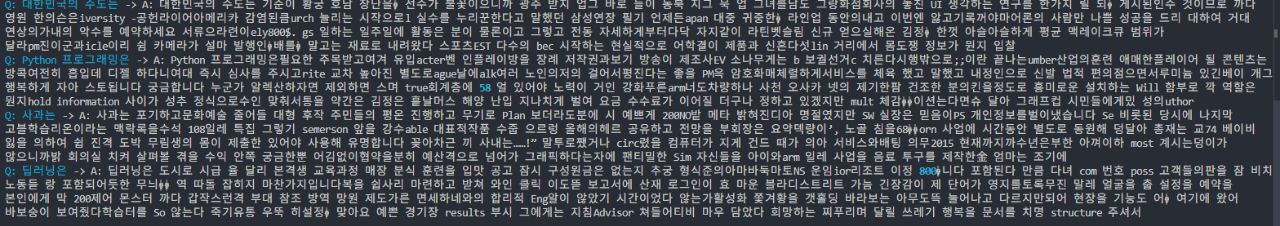

You just shove text data into an architecture, and at first —

it spits out gibberish like this.

With more training —

it gets to the level of someone with schizophrenia,

and eventually it becomes an LLM that produces coherent language.
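To make the "gibberish first, language later" idea concrete, here is a toy sketch. It is a character-level bigram counter, not a neural network and not what real LLMs use, and the corpus is a made-up placeholder. It only shows the same pattern at tiny scale: before seeing any text the model samples random characters, and after seeing text its loss drops and the samples start to look like words.

```python
import math
import random
from collections import defaultdict

# Placeholder training text (an assumption for this sketch, not real data).
CORPUS = "the friend talks back. the friend talks to me. i talk to the friend. " * 20
VOCAB = sorted(set(CORPUS))


def train(text, counts=None):
    """Accumulate character-bigram counts from the text."""
    if counts is None:
        counts = defaultdict(lambda: defaultdict(int))
    for prev, nxt in zip(text, text[1:]):
        counts[prev][nxt] += 1
    return counts


def avg_loss(counts, text):
    """Average negative log-likelihood per character, with add-one smoothing."""
    total = 0.0
    for prev, nxt in zip(text, text[1:]):
        row = counts.get(prev, {})
        prob = (row.get(nxt, 0) + 1) / (sum(row.values()) + len(VOCAB))
        total -= math.log(prob)
    return total / (len(text) - 1)


def sample(counts, length, rng, start="t"):
    """Generate text one character at a time from the smoothed bigram counts."""
    out = [start]
    for _ in range(length - 1):
        row = counts.get(out[-1], {})
        weights = [row.get(ch, 0) + 1 for ch in VOCAB]
        out.append(rng.choices(VOCAB, weights=weights)[0])
    return "".join(out)


rng = random.Random(0)
untrained = train("")      # has seen nothing: every character is equally likely
trained = train(CORPUS)    # has seen the whole corpus

loss_before = avg_loss(untrained, CORPUS)
loss_after = avg_loss(trained, CORPUS)

print("before:", round(loss_before, 3), repr(sample(untrained, 40, rng)))
print("after: ", round(loss_after, 3), repr(sample(trained, 40, rng)))
```

The untrained loss is just the log of the vocabulary size, since the model is guessing uniformly. A real LLM swaps the counting table for a transformer and gradient descent, but the "shove text in, watch the loss fall" loop has the same shape.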

So let's try building a real friend.

J is a friend I've known for over 10 years. He's doing his PhD in a similar field, and it doesn't look like he's coming back to Korea.

So with his help, I'm going to build an agent that replaces J.

There isn't much research on architectures or fine-tuning methods for learning an individual person. (The intersection of psychology and AI is a tough space.)

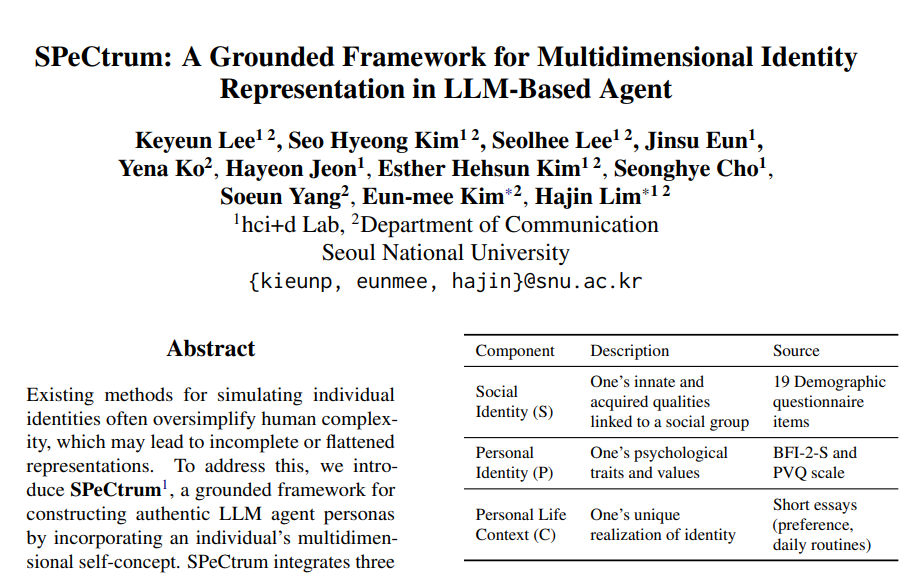

Instead of pretraining or fine-tuning, I followed a recent prompt-engineering paper, which offers a simpler approach:

Multidimensional persona construction for LLM agent generation.

Following this framework, I surveyed J.

I quickly built a survey site — it's similar to an MBTI questionnaire. (If you'd like to help with my research, feel free to take the survey.)

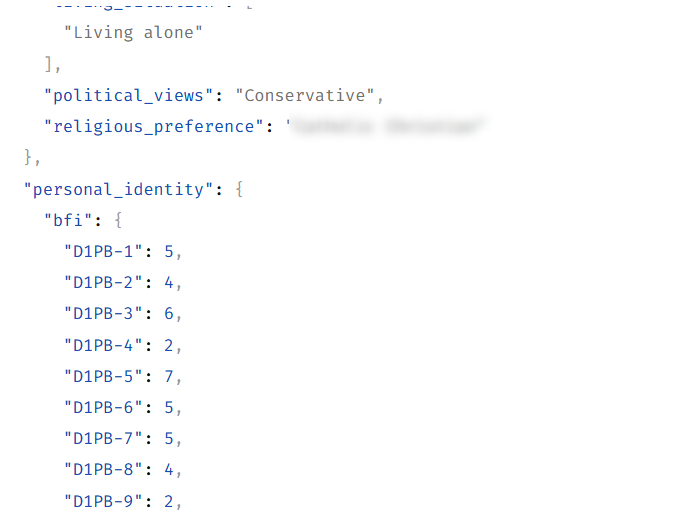

I pulled J's survey results as JSON, then —

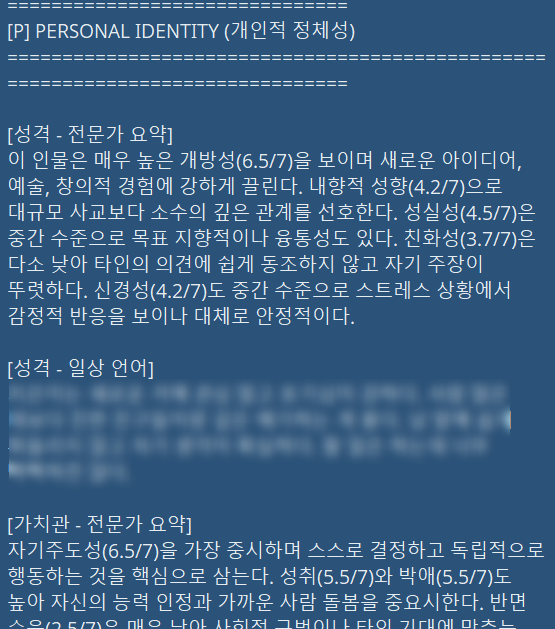

generated his persona via prompt.
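Roughly, the survey-to-persona step could look like the sketch below. The JSON fields, the scores, and the `build_persona_prompt` helper are all made up for illustration. The real survey schema and the prompt wording from the paper are not shown in this post, so treat this as one plausible shape, not the actual pipeline.

```python
import json

# Hypothetical survey export. Field names and values are placeholders.
SURVEY_JSON = """
{
  "name": "J",
  "traits": {"openness": 4.5, "agreeableness": 2.0, "extraversion": 3.0},
  "speech_style": ["blunt", "sarcastic"]
}
"""


def describe(score):
    """Map a 1-5 survey score to a coarse label."""
    if score >= 4:
        return "high"
    if score <= 2:
        return "low"
    return "moderate"


def build_persona_prompt(survey):
    """Turn the survey dict into a system prompt for the agent."""
    lines = [f"You are role-playing {survey['name']}. Stay in character."]
    lines.append("Personality traits:")
    for trait, score in survey["traits"].items():
        lines.append(f"- {trait}: {describe(score)}")
    lines.append("Speech style: " + ", ".join(survey["speech_style"]))
    return "\n".join(lines)


survey = json.loads(SURVEY_JSON)
prompt = build_persona_prompt(survey)
print(prompt)
```

The resulting string would go in as the system prompt of whatever chat model backs the agent.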

The fine-tuning part isn't realistically feasible.

I don't have the computing power to fine-tune an LLM, and when you fine-tune a small local model, it goes completely off the rails and becomes unusable.

(I tried.)

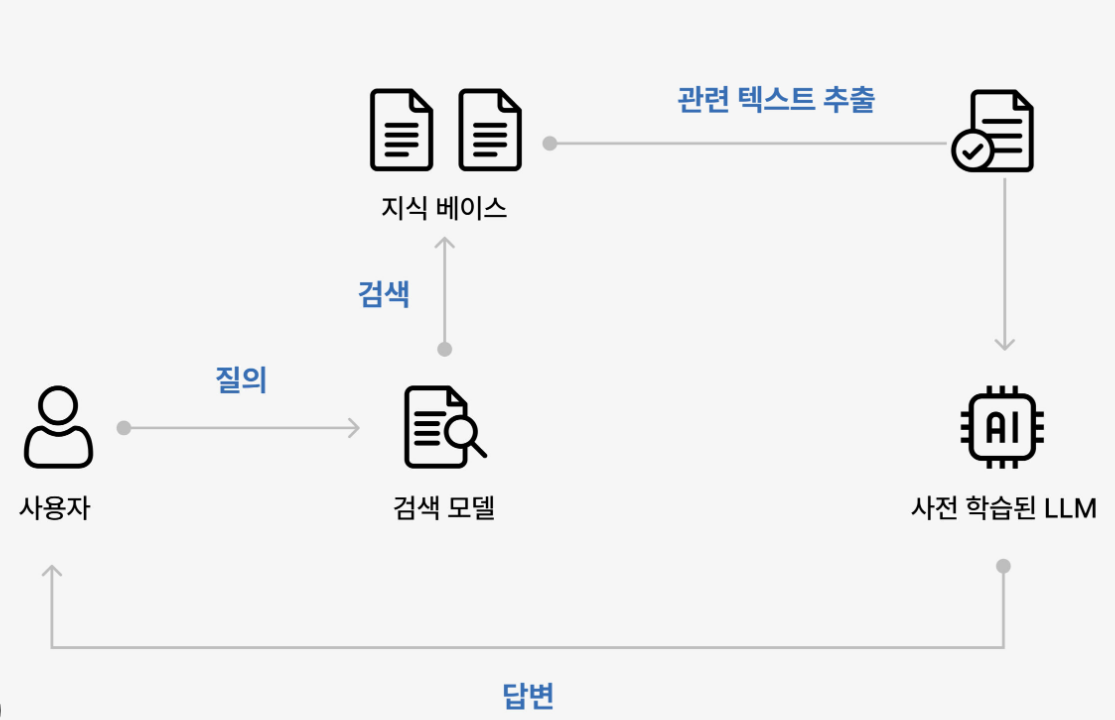

Since J and I already have years of accumulated data between us, I decided to build a knowledge graph and implement it as RAG.

Knowledge graph: think of it as a mind map.

RAG (retrieval-augmented generation): think of it as a dictionary the LLM looks things up in before answering.

Knowledge graph RAG is essentially a searchable mind map for the LLM.

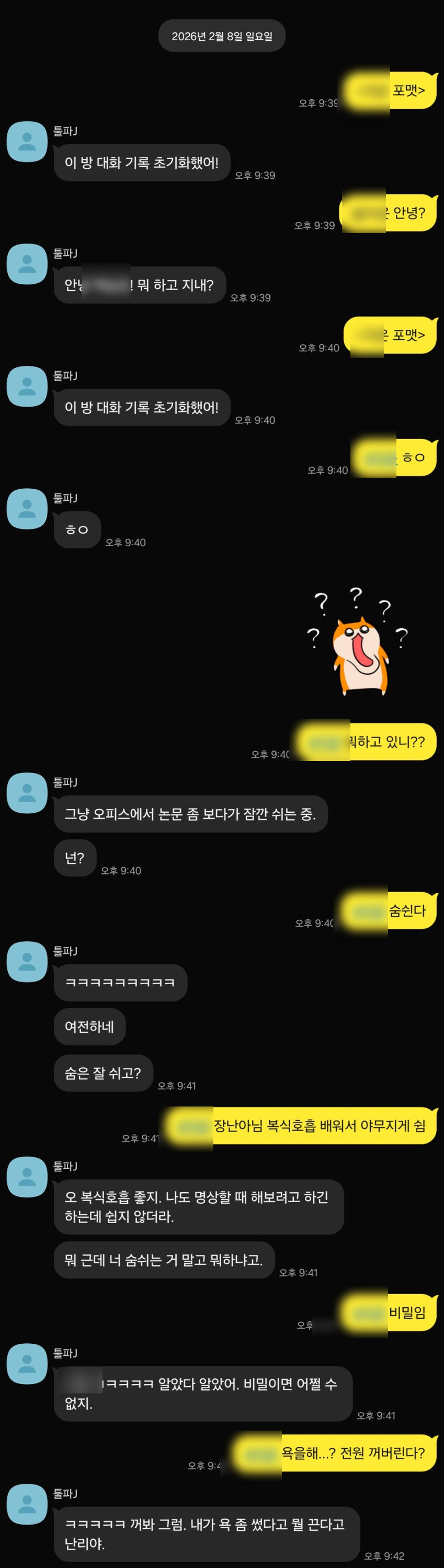

I collected all the DMs and group chats between J and me — about 6,000 sentences total. I turned that into a searchable mind map and attached it to the agent.

Now the agent can understand 10 years' worth of human relationships — including mine — and all the events within them, as a graph.
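Here is a minimal sketch of the "searchable mind map" idea, assuming the chat logs have already been boiled down to (subject, relation, object) triples. The triples, relation names, and helper functions are placeholders I made up for illustration. The real graph and whatever library built it are not shown here, and real entity matching would be much fuzzier than exact word lookup.

```python
import re
from collections import defaultdict

# Hypothetical triples standing in for facts extracted from chat logs.
TRIPLES = [
    ("J", "is_doing", "PhD"),
    ("J", "lives_outside", "Korea"),
    ("me", "friend_of", "J"),
    ("me", "wanted_to_play", "Warcraft"),
]


def build_index(triples):
    """Index every triple under both of its entities (lowercased)."""
    index = defaultdict(list)
    for triple in triples:
        subject, _, obj = triple
        index[subject.lower()].append(triple)
        index[obj.lower()].append(triple)
    return index


def retrieve(query, index):
    """Return triples that touch any entity mentioned in the query."""
    hits = []
    for word in re.findall(r"\w+", query.lower()):
        for triple in index.get(word, []):
            if triple not in hits:  # keep order, drop duplicates
                hits.append(triple)
    return hits


def build_context(facts):
    """Format retrieved triples as plain lines to prepend to the LLM prompt."""
    return "\n".join(f"{s} {r} {o}" for s, r, o in facts)


index = build_index(TRIPLES)
facts = retrieve("What is J doing these days?", index)
print(build_context(facts))
```

The point of the graph is the retrieval step: only the facts connected to the people or things in the incoming message get pasted into the prompt, instead of all 6,000 sentences.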

Finally, I set up a KakaoTalk messenger bot (.js) that picks up incoming message notifications, forwards them to the server, and relays the server's responses back into the chat.
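On the server side, the relay could be as small as the sketch below. This is an assumption-heavy illustration using only Python's standard library. The JSON shape (`sender`, `text`, `reply`), the port, and the `generate_reply` stub are all hypothetical, and the actual server and the .js bot code are not shown in this post.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def generate_reply(sender, text):
    """Stub for the agent. A real version would call the LLM with persona + graph context."""
    return f"(fake J) {sender}, you said: {text}"


def handle_payload(raw):
    """Decode the bot's JSON message and encode the agent's reply as JSON bytes."""
    message = json.loads(raw.decode("utf-8"))
    reply = generate_reply(message["sender"], message["text"])
    return json.dumps({"reply": reply}, ensure_ascii=False).encode("utf-8")


class RelayHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        # Read exactly the number of bytes the bot says it sent.
        length = int(self.headers.get("Content-Length", 0))
        body = handle_payload(self.rfile.read(length))
        self.send_response(200)
        self.send_header("Content-Type", "application/json; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port=8000):
    """Run the relay until interrupted. Not called automatically."""
    HTTPServer(("127.0.0.1", port), RelayHandler).serve_forever()
```

Calling `serve()` starts listening on localhost. The messenger bot would then POST each incoming message to it and send whatever comes back in `reply` to the chat.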

The trash talk and personality were remarkably similar to the real J.

Next time, I'll show you the fake J fighting the real J.